Mulesoft Milestone Demo: Mule 4 and Studio 7 - Highlights & Discussion (Part 2 of 3)

In this post I will discuss the highlights of Mulesoft's webinar, Mule 4 / Studio 7 Beta Walkthrough. This webinar is the second part of a three-part series that Mulesoft is providing to introduce and drive beta testing of the new Mule 4 platform, which includes improvements to the Anypoint Platform, the release of Studio 7, and the release of version 4 of the Mule engine.

FAQ Review

- Mulesoft is looking for feedback on what they have changed in Mule 4. I will be downloading and trying out some of the new features, so look for additional posts as I dive using

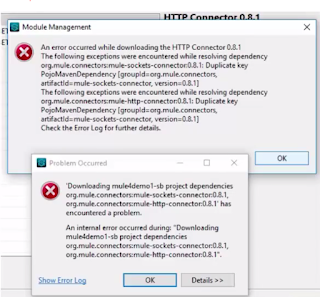

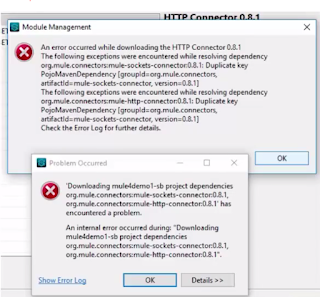

- Some users are having issues with the new Studio showing no connectors. This is caused by not having the JDK on the system path ahead of any JRE installations. Studio 7 requires the additional tools from the JDK to run all the components. I hope this is called out in the documentation, or that a check is added to Studio 7's startup to let users know. Here is a screenshot of the error from the webinar:

- Mulesoft now has a page tracking the changes for Mule 4 - https://mule4-docs.mulesoft.com/mule-user-guide/v/4.0/mule-4-changes

- The next Mule 4 Beta release will focus on the Mule SDK, a CE distribution with DataWeave (this could be an interesting development and might allow for hybrid licensed/open source deployment models), APIkit DataSense support, scripting components, API Gateway, DataWeave support for COBOL copybooks/flat files, and new connectors

DataWeave Expression Language

- Why DataWeave?

- memory handled by the engine

- random access

- concurrent access

- cache payloads if larger than memory

- reduce steps in flow - don't have to learn MEL and Java to get access to data

- When to use DataWeave?

- Mulesoft's opinion is that integration logic should be broken apart for separation of concerns, testability, maintainability and using the best tool for the job. I agree with the

- Flows - should only handle the sequencing of data and the operations on data. I agree that flows are well suited to controlling the flow of data and the operations against it. This really shines in Studio, where you can create a visual shell of how the data and its operations would work without writing a line of code.

- Expressions (DataWeave) - Mulesoft has optimized DataWeave for data, and with built-in streaming it is easy to get random access to the data throughout the flow. The code will be less verbose and easier to maintain.

- Code (Java classes or Groovy, JavaScript (Rhino), Python, Ruby, or Beanshell) - Mulesoft recommends externalizing the code outside of Mulesoft and letting Mulesoft make calls to it. This really seems no different from API-led connectivity: if you're calling code, it really should be built as a microservice that may or may not be fronted by a Mulesoft API.

- Calling Java code from DataWeave

- The webinar reviews how to call Java code from DataWeave; however, I would avoid doing this so that the DataWeave expressions focus on data and not on business logic which can be built in.
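To illustrate the kind of code I would keep outside of DataWeave, here is a minimal sketch of a plain Java helper. The class name `DiscountRules` and its `applyDiscount` method are made up for illustration and did not appear in the webinar; the DataWeave side of the call is not shown.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

/** Hypothetical helper: business logic kept in plain Java, outside the flow. */
final class DiscountRules {
    private DiscountRules() {}

    /** Returns the amount reduced by the given percentage, rounded to 2 decimals. */
    static BigDecimal applyDiscount(BigDecimal amount, int percent) {
        if (percent < 0 || percent > 100) {
            throw new IllegalArgumentException("percent must be between 0 and 100");
        }
        // (100 - percent) / 100 always terminates, so exact division is safe here.
        BigDecimal factor = BigDecimal.valueOf(100 - percent).divide(BigDecimal.valueOf(100));
        return amount.multiply(factor).setScale(2, RoundingMode.HALF_UP);
    }
}
```

Because the method is static and has no side effects, it is easy to unit test on its own, whether it ends up called from an expression or wrapped in a separate service.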

- Simplified Precedence Rules

- All operators and traits are functions in Mule 4, which will make DataWeave easier to understand

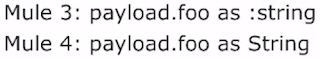

- The new style for naming types seems cleaner to me, but I think this is really just a matter of personal preference

- The additional parentheses will drive developers up a wall, but I think in the end we will actually appreciate the autocomplete when building complex DataWeave operations

- Typing for Variables/Function Parameters

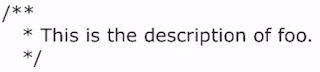

- Multi-line comments are now supported!

Streaming

- Payload is read into memory as it is consumed

- Concurrent & random access is enabled

- Streaming defaults can be customized on a stream strategy

- File Store (This approach is actually faster than in memory)

- Half a MB is stored in memory, and the rest is stored to disk and read in half-MB chunks

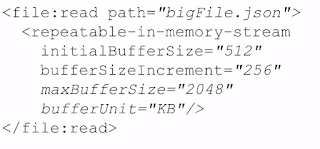
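The file store idea can be sketched in plain Java. This is only my own rough illustration of the concept, not how Mule implements it: the `SpillBuffer` class and its methods are hypothetical, and the in-memory limit is a constructor parameter rather than the half MB mentioned in the webinar.

```java
import java.io.IOException;
import java.io.InputStream;
import java.io.RandomAccessFile;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;

/** Hypothetical sketch (not Mule's implementation): head in memory, remainder on disk. */
final class SpillBuffer implements AutoCloseable {
    private final byte[] head;     // first bytes of the payload, kept in memory
    private final Path spillFile;  // temp file holding everything past the head
    private final long size;       // total payload size in bytes

    SpillBuffer(InputStream source, int inMemoryLimit) {
        try {
            head = source.readNBytes(inMemoryLimit);  // requires Java 11+
            spillFile = Files.createTempFile("spill-", ".bin");
            Files.copy(source, spillFile, StandardCopyOption.REPLACE_EXISTING);
            size = head.length + Files.size(spillFile);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    long size() {
        return size;
    }

    /** Random access: returns length bytes starting at offset, from memory and/or disk. */
    byte[] read(long offset, int length) {
        if (offset < 0 || length < 0 || offset + length > size) {
            throw new IndexOutOfBoundsException("range outside buffer");
        }
        byte[] out = new byte[length];
        int fromHead = (int) Math.max(0, Math.min(length, head.length - offset));
        if (fromHead > 0) {
            System.arraycopy(head, (int) offset, out, 0, fromHead);
        }
        if (fromHead < length) {
            // Each call opens its own handle, so concurrent readers do not share a file position.
            try (RandomAccessFile file = new RandomAccessFile(spillFile.toFile(), "r")) {
                file.seek(offset + fromHead - head.length);
                file.readFully(out, fromHead, length - fromHead);
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
        }
        return out;
    }

    @Override
    public void close() {
        try {
            Files.deleteIfExists(spillFile);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

The same range can be read as many times as needed, which is what makes the payload repeatable even though the original input stream can only be consumed once.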

- In Memory

- Half a MB is stored in memory, and the buffer is incremented from there

- Example of how to configure the stream:

- Streaming can be disabled

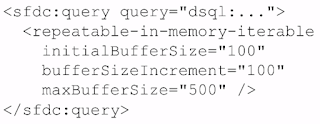

- Streaming can also work for objects, as object streams
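For the in-memory strategy, my understanding is that the buffer starts at a fixed size and grows by a fixed increment as more of the payload is consumed. Here is a small sketch of that behavior, assuming a hypothetical `GrowingBuffer` class; the names and sizes are mine and are not taken from Mule.

```java
import java.util.Arrays;

/** Hypothetical sketch: an in-memory buffer that grows by a fixed increment. */
final class GrowingBuffer {
    private final int increment;  // how many bytes to add each time the buffer is full
    private byte[] data;          // current backing array
    private int length;           // number of bytes actually written

    GrowingBuffer(int initialSize, int increment) {
        if (initialSize <= 0 || increment <= 0) {
            throw new IllegalArgumentException("sizes must be positive");
        }
        this.data = new byte[initialSize];
        this.increment = increment;
    }

    /** Appends a chunk, growing the backing array one increment at a time if needed. */
    void append(byte[] chunk) {
        while (length + chunk.length > data.length) {
            data = Arrays.copyOf(data, data.length + increment);
        }
        System.arraycopy(chunk, 0, data, length, chunk.length);
        length += chunk.length;
    }

    int capacity() {
        return data.length;
    }

    int length() {
        return length;
    }

    /** Returns a copy of the bytes written so far. */
    byte[] toByteArray() {
        return Arrays.copyOf(data, length);
    }
}
```

With an initial size and increment of half a MB each, a 1.2 MB payload would end up in a 1.5 MB buffer. A real implementation would presumably also cap the maximum size, which this sketch leaves out.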

Execution Engine

- Processing strategies and exchange patterns are no longer needed

- Flows are non-blocking

- Flows are synchronous by default (same as Mule 3)

- Use async instead of the one-way exchange pattern

- Global thread pools for all flows

- These pools can be configured

- Thread pools are now built around I/O, light CPU, and heavy CPU workloads, and the number of threads is optimized for you
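To make the idea of pools per workload type more concrete, here is a rough sketch using standard Java executors. The `WorkloadPools` class is hypothetical, and the pool sizes are illustrative guesses of mine, not the values Mule picks.

```java
import java.util.EnumMap;
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.function.Supplier;

/** Hypothetical sketch: one shared pool per kind of work instead of one pool per flow. */
final class WorkloadPools implements AutoCloseable {
    enum Kind { IO, CPU_LIGHT, CPU_HEAVY }

    private final Map<Kind, ExecutorService> pools = new EnumMap<>(Kind.class);

    WorkloadPools() {
        int cores = Runtime.getRuntime().availableProcessors();
        // Illustrative sizing only: blocking I/O gets an elastic pool,
        // CPU-bound work gets pools tied to the number of cores.
        pools.put(Kind.IO, Executors.newCachedThreadPool());
        pools.put(Kind.CPU_LIGHT, Executors.newFixedThreadPool(cores * 2));
        pools.put(Kind.CPU_HEAVY, Executors.newFixedThreadPool(cores));
    }

    /** Runs the task on the pool that matches its kind of work. */
    <T> CompletableFuture<T> submit(Kind kind, Supplier<T> task) {
        return CompletableFuture.supplyAsync(task, pools.get(kind));
    }

    @Override
    public void close() {
        pools.values().forEach(ExecutorService::shutdown);
    }
}
```

The point of the design is that the caller only says what kind of work a task is, and the sizing decisions live in one place.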

- Controlling Concurrency - Coming soon in a release candidate; it will be controlled at the flow level, not at the thread pool level

- This removes processing only one message at a time for that use case
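Since the flow-level concurrency control was not released at the time of the webinar, I can only guess at how it will look. As a sketch of the general idea, a limit that belongs to the flow rather than to a thread pool can be modeled with a semaphore; the `FlowConcurrencyLimit` class below is hypothetical.

```java
import java.util.concurrent.Semaphore;
import java.util.function.Supplier;

/** Hypothetical sketch: a per-flow cap on in-flight messages, independent of pool size. */
final class FlowConcurrencyLimit {
    private final Semaphore permits;

    FlowConcurrencyLimit(int maxConcurrency) {
        if (maxConcurrency < 1) {
            throw new IllegalArgumentException("maxConcurrency must be at least 1");
        }
        this.permits = new Semaphore(maxConcurrency);
    }

    /** Waits for a free slot, runs the work, then frees the slot again. */
    <T> T process(Supplier<T> work) {
        permits.acquireUninterruptibly();
        try {
            return work.get();
        } finally {
            permits.release();
        }
    }
}
```

With a limit of 1, messages are processed one at a time even when the shared pool behind the flow has many threads available.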

